|

11/28/2023 0 Comments Mariadb varchar length

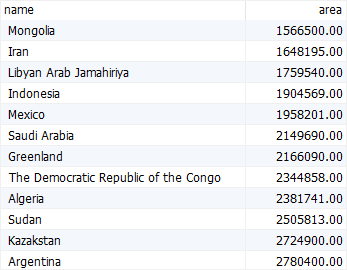

Update: Because the exploding popularity of variable-length strings, for example, with the usage of emojis, Oracle has been pushing for improved performance for those cases. Try to be conservative because, unless the table is too large, you can always change the definition later. A VARCHAR(100) is better than VARCHAR(255) (although a VARCHAR(20) would be better), even if you do not know the exact length. So the general piece of advice is to use the smallest type possible, because it can potentially create performance or management problems otherwise. Mysql> SELECT CHAR_LENGTH(REPEAT('ñ', 255)) For example REPEAT('ñ', 255) has more than 2^255 bytes in utf8, so it would require more than 1 byte for storing its length: mysql> SELECT LENGTH(REPEAT('ñ', 255)) Last but not least, as has reminded me, more than 1 byte for the length may be required even if you are using VARCHAR(255), because the definition is in characters, while the length stores bytes. See, for example: error 1118: row size too large. This caused some weirdness between the COMPACT and DYNAMIC formats. That has some consequences in performance (sometimes good, sometimes bad, depending on the usage) that cannot be ignored. So, it is very likely that you won't be able to index fully a VARCHAR(255) field (assuming you use utf8 or any other variable length-encoding).Īdditionally, the maximum inline row size for InnoDB is half a page (around 8000 bytes), and variable-lenght fields like BLOB or varchar, can be stored off-page if they do not fit on the half-page. Index size is restricted to 3072 bytes and single column indexes, to 767 bytes*. It has some consequences for InnoDB, too. All of that because of a poor selection of its defined data type (independently of the contents). Not only this causes unnecessary memory stress, it may provoke the actions to be performed on disk, potentially slowing it down thousands of times. That is almost 1GB on a 1 million table -only for the VARCHAR. If you can think about the wasted space, if we are using MySQL's 'utf8' charset encoding, MEMORY will reserve 2 bytes for the length + 3 * 255 bytes per row (for values that may only take a few bytes on InnoDB). Should we care about those other engines? Yes, we should, because even if we do not use them directly, MEMORY tables are very commonly used for intermediate results (temporary tables on memory), and as the results are not known beforehand, the table has to be created with the maximum size possible - VARCHAR(255) if that is our type. MyISAM has a fixed row size format, and MEMORY tables are always fixed in size. While InnoDB may store a varchar in a dynamic way, that is not true for other engines.

So, that means that we should always do VARCHAR(255), shouldn't we? No. That is not true with VARCHAR(256), because then 2 bytes (at least) for the length are always required. And that was possible, because the actual data was not affected by that change. In fact, if I remember correctly, I recall someone modifying the data dictionary with an hexadecimal editor in order to change something like a VARCHAR(50) into a VARCHAR(100), so it could be done dynamically (normally, that requires a table reconstruction). In the standard row formats for InnoDB and MyISAM (dynamic/compact) a VARCHAR(50) and a VARCHAR(255) will store the string text in the same way- 1 byte for the length and the actual string with between 1 and 4 bytes per character (depending on the encoding and the actual character stored). This is a very common "exam/interview question".

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed